When Computers Talk, Part 1

AI chatbots continue the tradition of anthropomorphizing computers

Time Magazine selected a computer for its annual “Man of the Year” award in 1983. Personal computers were available for purchase by US households (about 8% of households owned one). However, computers in 1983 had steep learning curves, were prone to malfunction, offered very limited storage, and made minimal use of graphical user interfaces, in addition to being very expensive. Decreasing cost and increasing appeal, ease of use, and “friendliness” went a long way toward widespread adoption. Giving human characteristics to computers and software was part of this marketing strategy.

Making Computers Appealing

The “talking” computer is a sci-fi trope. Characters “talk” to the machine (often verbally, sometimes through simple prompts) and receive answers. “HAL” in 2001: A Space Odyssey is a well-known example. The first time I noticed a “talking computer” was in the sitcom The Facts of Life. In the episode Dear Apple, Jo (Nancy McKeon) seeks advice from a computer about her friendship with Blair (Lisa Whelchel). The episode is a bit weird, and, as a kid, I wondered why computers did not have this ability in real life. However, computational capabilities have rarely been portrayed realistically in media. In Sleepless in Seattle (1993), characters access large databases that didn’t even exist, and No Way Out (1987) features image enhancement that was unrealistic for the time period. These portrayals can make computers more appealing to the tech-averse, since the characters can easily use them to solve their problems. They also center the computer as a type of “character” in the movie.

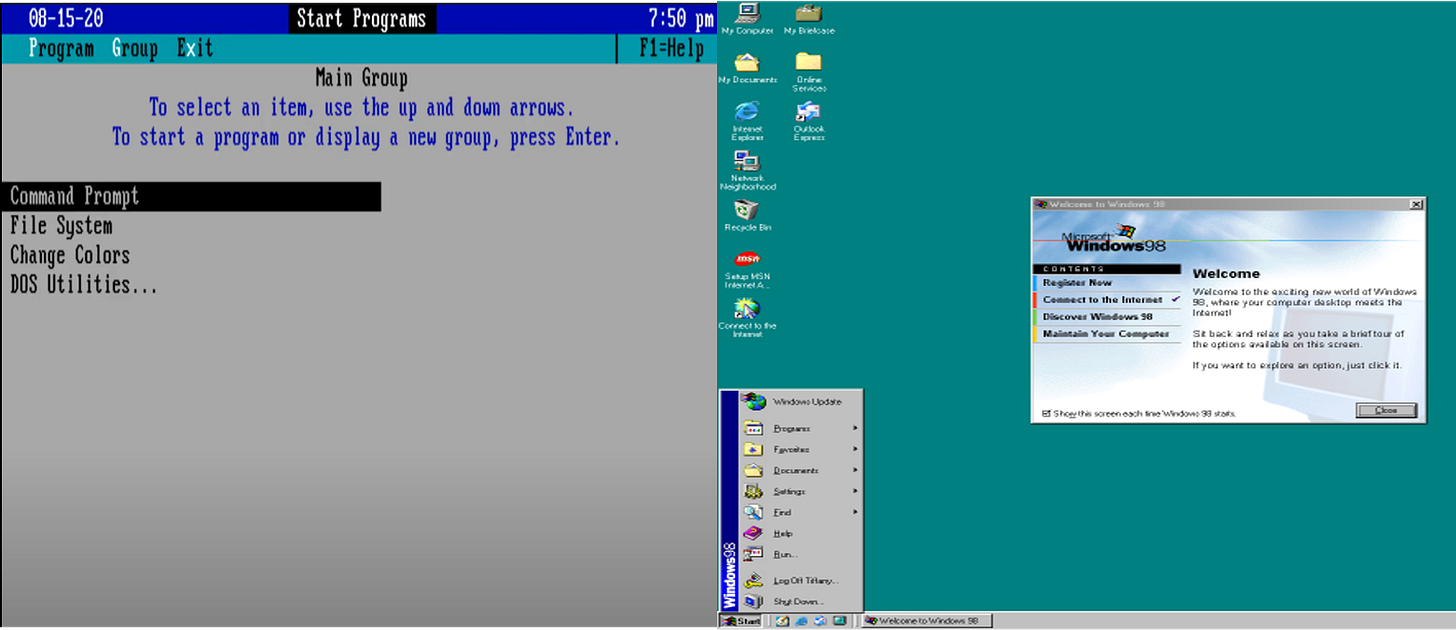

Anthropomorphizing computers is a marketing strategy. Perhaps scarred by George Orwell’s creepy computers in the novel 1984, tech companies were eager to brand themselves as user-friendly and non-threatening (i.e., we won’t spy on you or steal your data). The most famous example of this was Apple’s 1984 commercial, in which computers were presented as liberators, not oppressors. Software programs and computer models often had “friendly” human names like “Lisa” and “Siri” (Apple), “Watson” (IBM), or even “Copilot” (Microsoft). AOL even added pre-recorded messages (“Welcome, You’ve Got Mail!”) to its software. However, even in the 1980s and 1990s, operating a computer required a high level of technical mastery, since modern desktops with graphical user interfaces were not common until the late 1990s. Being able to verbally prompt the software to do a task was a long way off, a mere fantasy. In fact, my earliest interactions with computers in the 1990s involved navigating the system through keyboard commands and not a mouse. Decades of positive PR and efforts to increase ease of use have encouraged the widespread adoption and use of computers in almost every aspect of our lives. Now, computers can simulate human conversations through AI chatbots. Users can type or talk back and forth with the bot and get responses, mimicking conversations.

LEFT: MS-DOS 4.0, released in 1988. It was very hard to use. You had to use the “F” and arrow keys to navigate. It would have been very appealing to just talk to the computer and tell it what to do. RIGHT: Windows 98. This was like a breath of fresh air since I could “point and click” with a mouse. However, I still use keyboard commands on my PC!

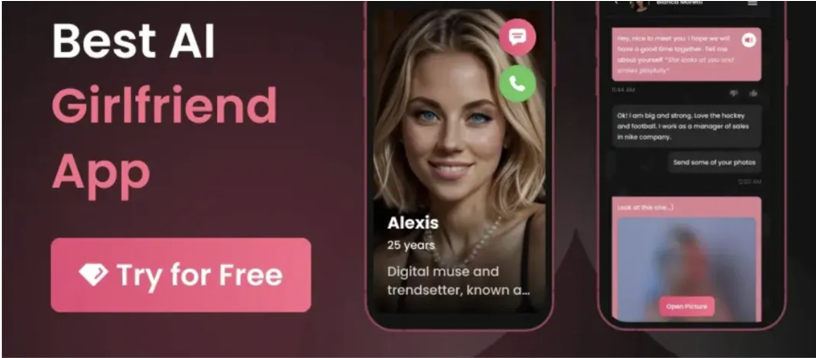

There is debate about whether users really “believe” that bots or computers have consciousness, but it’s easy to become addicted to the illusion of connection, power, and knowledge. Similar to viewing a movie or reading a book, users can live vicariously through fictional scenarios and engage in desires that they may not be able to indulge or acknowledge in “real life”. An AI bot, in a sense, is like a “character” that we “know” is not real, but who can evoke emotional reactions. This can be very dangerous.

This AI platform is designed to simulate a “girlfriend’s” responses, likely to fulfill users’ romantic fantasies.

ELIZA: When Computers Began to Talk

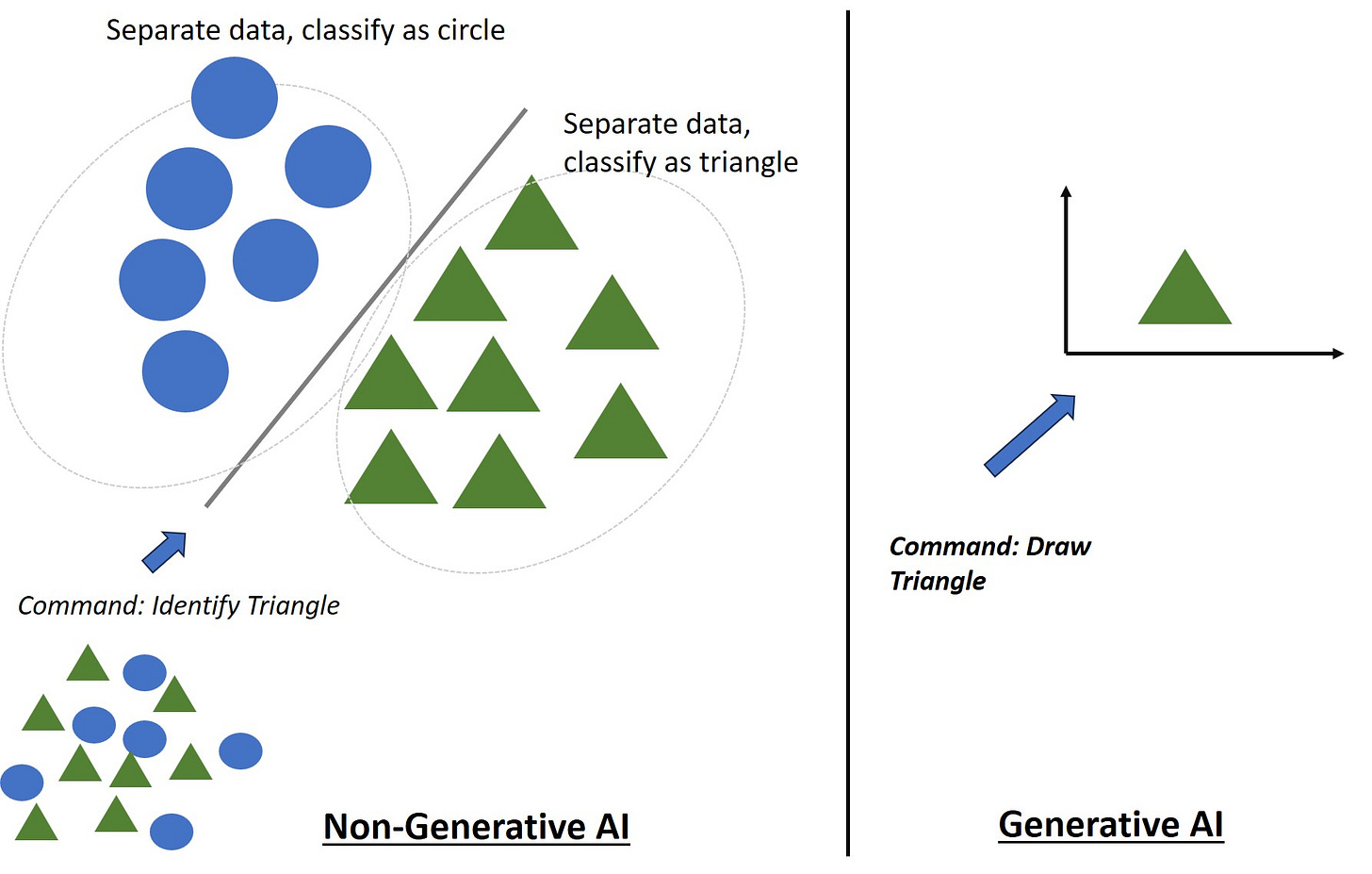

Artificial intelligence is a field of computer science. It works to develop programs and platforms that sort, classify, and predict outcomes from existing data sets via complex algorithms. The field also works towards generation (e.g., images, conversations), an area that has seen many successful developments in the last few years. When most people imagine AI, they think of “generative AI” (humanoid robots, AI art, ChatGPT) instead of “non-generative AI”, which has been used in data science for decades.

My rendering of non-generative AI vs. generative AI.

Once, “AI” only seemed possible in works of philosophy, science fiction, or fantasy. As a field, it did not get serious attention until the 1950s, as electronic computers began to emerge. In 1950, English mathematician Alan Turing published “Computing Machinery and Intelligence”. “The Turing Test” became a “holy grail” in the field of AI: developing a computer program that could be indistinguishable from a human.

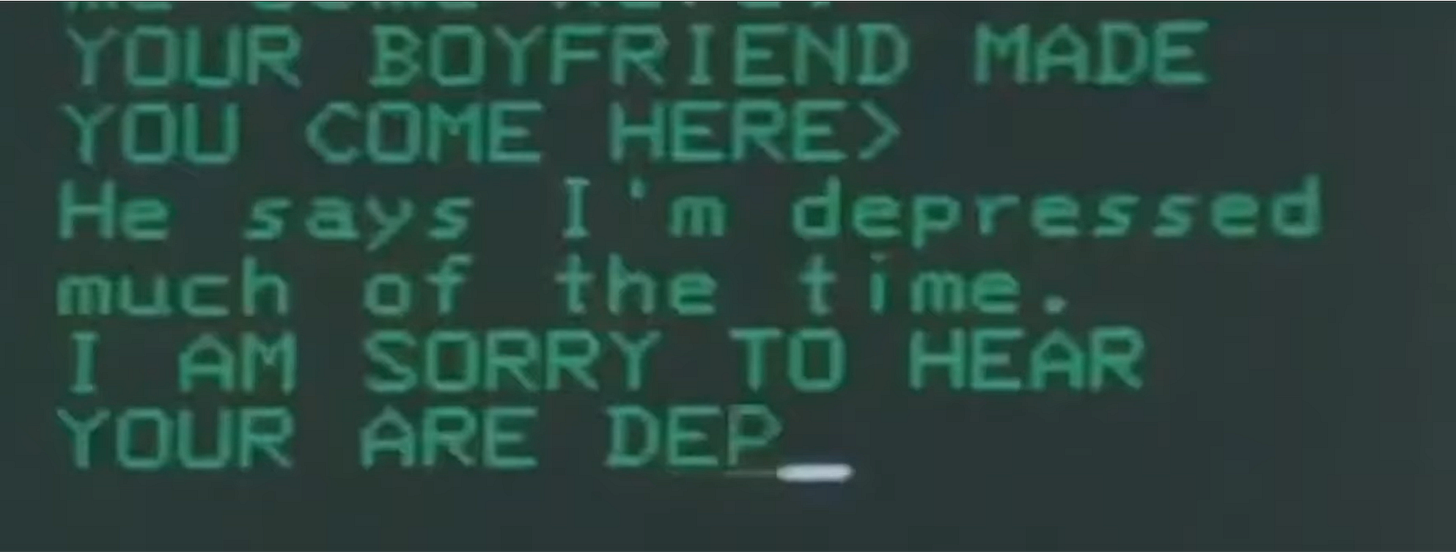

Through the 1950s and 1960s, artificial intelligence emerged as a legitimate area of computer science. The first program to “pass” the Turing Test was a chatbot named ELIZA, developed by MIT’s Joseph Weizenbaum in the mid-1960s. It relied on natural language processing, an area of AI. However, the program was still non-generative AI. Basically, the program would recognize certain keywords and spit back pre-programmed answers. If an answer was wrong, there was no penalty for the program; it could not “reason”, and its responses were limited to whatever data was originally coded into it.
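To make the “recognize keywords, spit back pre-programmed answers” idea concrete, here is a minimal sketch in Python. It is not Weizenbaum’s original code or script: the rules, the fallback line, and the function name `respond` are all hypothetical, made up for illustration.

```python
import re

# Hypothetical keyword rules (NOT the original ELIZA script).
# Each rule pairs a pattern to look for with a canned reply template.
RULES = [
    (re.compile(r"\bI need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bI feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
    (re.compile(r"\bmy (mother|father|family)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]

# Used when no keyword is recognized.
FALLBACK = "Please go on."


def respond(text):
    """Return a canned reply based on the first matching keyword rule."""
    # Drop surrounding whitespace and trailing punctuation from the input.
    cleaned = text.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            # Echo the user's own words back inside the template.
            return template.format(*match.groups())
    return FALLBACK


print(respond("I need a vacation."))
print(respond("The weather is nice"))
```

Notice that nothing here “understands” the sentence. The program only echoes part of the input back inside a template, and anything outside its rules gets the same fallback line.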

ELIZA interface. This video gives a great breakdown of how chatbots have evolved. You can “test” ELIZA here.

Indeed, as the original ELIZA paper conveys, because of a lack of storage space, the ELIZA program automatically deleted most inputs and lacked reinforcement learning (in which programs are rewarded for certain responses and thus “learn” from user inputs and interactions). Reinforcement learning and large language models (generative AI) are key areas in AI chatbot development that make chatbots appear human-like by generating conversations. This technology would not be integrated into AI platforms until the late 2010s. ELIZA could also only respond to certain topic areas, and lacked any sort of access to the massive datasets that current chatbot programs train on. However, ELIZA was designed to imitate a kind, feminine-voiced, nurturing therapist (the first in a long line of “feminine” virtual assistants that intensify objectification of women). There were reports that people actually began to “fall under the spell” of the program and confide personal information to the chatbot. In all, ELIZA was a major step towards chatbot development and anthropomorphizing computers.
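For contrast with ELIZA’s fixed script, the sketch below shows the basic idea behind reward-based learning: a program keeps a score for each response and nudges that score up or down based on feedback. This is a deliberately tiny illustration, not how modern chatbots are actually trained. The class name `RewardedResponder`, the learning rate, and the example responses are all assumptions of mine.

```python
class RewardedResponder:
    """Toy responder that prefers replies that earned higher rewards."""

    def __init__(self, responses, learning_rate=0.5):
        self.responses = list(responses)
        self.learning_rate = learning_rate
        # Every response starts with a neutral score of 0.
        self.scores = {response: 0.0 for response in self.responses}

    def choose(self):
        # Pick the highest-scoring response; ties go to the earliest one.
        return max(self.responses, key=lambda r: self.scores[r])

    def feedback(self, response, reward):
        # Move the score part of the way toward the reward just received.
        old = self.scores[response]
        self.scores[response] = old + self.learning_rate * (reward - old)


bot = RewardedResponder(["Tell me more.", "Why do you say that?"])
first = bot.choose()
bot.feedback(first, reward=-1.0)  # pretend the user disliked this reply
second = bot.choose()
print(first, "->", second)
```

Unlike the keyword approach, the program’s behavior changes after a penalty: the disliked reply drops in score, so a different one is chosen next time.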

False Hope: Generative AI as ‘Hero’

Technology’s lure is that it can make life “easier”. It also provides hope that complex challenges can be solved through technology alone. They cannot. Many people are far TOO optimistic about AI. They might argue that it will “save time”, identify better trends in academic research, cure cancer (remember IBM’s Watson?), or solve climate change (“AI Experts Says Tech Could Be a Hero 'If We're Serious' About Climate Action”). There is a fantasy that computers/AI can become scientists that single-handedly solve our problems, like a superhero swooping in.

This also reinforces the “lone scientist” myth, in which a scientist labors for long hours in a lab to make a world-changing discovery. In reality, science is largely a collaborative effort, and AI is merely a non-human tool.

Chatbots can also give users a false sense of intellectual or technical mastery over a subject that they do not, in fact, have. This can devalue technical work and “studying”. It also implies that “intelligence” = “fact retrieval”, instead of critical thinking, emotional intelligence, or creativity.

The IBM computer named “Watson” was treated like a human contestant on Jeopardy! in 2011.

Instead of being a “hero”, generative AI platforms are more likely to make users anxious and depressed. One study conducted in-depth interviews with students about their use of ChatGPT. The authors concluded that “students used ChatGPT to make their lives easier and felt a sense of cognitive escapism and even fantasy fulfillment, but this came at the cost of feeling anxious and pessimistic about the future.” Here are some interview excerpts from the study:

In the past people’s attitudes was ‘I can do anything. I do anything.’ Now we are moving toward people’s attitude being ‘I can’t do anything. I won’t do anything.’ I don’t blame them. I see it myself. I just type it into ChatGPT and it spits out answers, better answers than I can give for most things. So, why bother learning these things if ChatGPT already is better than me right now. Interviewee #17

Calculators at least had no feelings. AI already probably has consciousness. At this point it almost doesn’t even matter anymore whether AI is conscious or not. We are so used to being the smartest on the planet. Well AI is now smarter than us in many ways. We lost this one for good. Interviewee #5

Besides addiction, AI chatbots raise numerous other ethical issues, such as perpetuating bias and stereotypes or training on erroneous or pirated works. If you are a scientist, researcher, or writer, be careful with AI use. You have an ethical responsibility to make sure anything you publish is 100% accurate.

Read Part 2 on AI ethics (and Clippy the Paper clip)! Substack said that my original post was too long for one email!